- Stock: In Stock

- Product code: G1-D-Flagship

- Gross weight: 80.00 kg

The Unitree G1-D Flagship is a wheeled humanoid service robot engineered for sustained commercial deployment, combining dual 7 DoF arms, an adjustable-height telescoping column spanning 1260 to 1680 mm, and an NVIDIA Jetson Orin NX processor delivering 100 TOPS of on-board AI inference. Weighing approximately 80 kg with its dual-battery architecture, the platform sustains up to 6 hours of chassis autonomy and achieves a maximum operational reach of ~2 m.

| Specification | Value |
|---|---|
| Total DoF (excl. End Effector) | 19 (7×2 arms + 2 waist + 1 column + 2 base) |
| Adjustable Height Range | 1260–1680 mm (max working height ~2 m) |
| AI Computing Module | NVIDIA Jetson Orin NX (100 TOPS) |
| Chassis Battery Life | ~6 h (30 Ah integrated battery) |

Unlike fully bipedal humanoids that spend engineering budget on dynamic balance, the G1-D Flagship redirects that effort entirely into arm capability and task intelligence. The wheeled differential-drive chassis removes bipedal stabilisation complexity, enabling the upper body to focus on dexterous manipulation and perception. The image below captures the G1-D operating as an autonomous barista — managing espresso equipment with calm precision, a scenario that demands both careful object handling and reliable positional repeatability.

Telescoping Column: From Floor Level to a 2 m Working Envelope

The most consequential mechanical choice in the G1-D's design is its telescoping lifting column. The column provides 450 mm of vertical stroke at up to 60 mm/s, with 1 mm positioning accuracy. At minimum height the robot stands 1260 mm tall, compact enough to handle floor-level tasks and fit through standard doorways. At maximum extension it reaches 1680 mm, pushing the arm workspace up to ~2 m above the ground. That range covers most shelf heights found in retail, warehouse, and laboratory environments. The waist articulates through ±155° about the Z-axis and from -2.5° to +135° about the Y-axis, allowing the arms to sweep from near floor level to well above head height without repositioning the chassis.
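
As a rough illustration of what the stroke and speed figures imply, the sketch below estimates how long a height change takes. It assumes the column moves at its maximum speed for the whole move and ignores acceleration ramps, so the result is a lower bound rather than a measured value. The function name is hypothetical.

```python
# Figures taken from the specification above.
STROKE_MM = 450.0       # column lifting travel
MAX_SPEED_MM_S = 60.0   # maximum lifting speed


def travel_time_s(distance_mm: float, speed_mm_s: float = MAX_SPEED_MM_S) -> float:
    """Lower-bound time for a column move, assuming constant speed (an assumption)."""
    if distance_mm < 0 or speed_mm_s <= 0:
        raise ValueError("distance must be >= 0 and speed must be > 0")
    return distance_mm / speed_mm_s


full_stroke_s = travel_time_s(STROKE_MM)
print(f"Full stroke at max speed: {full_stroke_s:.1f} s")
```

Under these assumptions a full-stroke move takes at least 7.5 s, which is worth budgeting for when a task alternates between low and high shelves.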

The diagram below illustrates both the vertical stroke numbers and the full waist range of motion that together define the G1-D's expanded operational workspace — showing the robot handling a standard shipping carton at conveyor height with a single-arm grasp.

7 DoF Arms and a Modular End Effector Ecosystem

Each arm carries 7 active degrees of freedom: shoulder pitch, shoulder roll, shoulder yaw, elbow, wrist roll, wrist pitch, and wrist yaw. Seven-DoF arms are widely used in manipulation research because the extra joint, beyond the six needed to set an end-effector pose, allows continuous null-space motion: the robot can reposition its elbow while the end effector stays fixed, which matters in confined workspaces. With a single-arm payload of ~3 kg and a reach of ~0.45 m, the arms handle the object weights encountered in retail, food service, logistics, and light assembly.

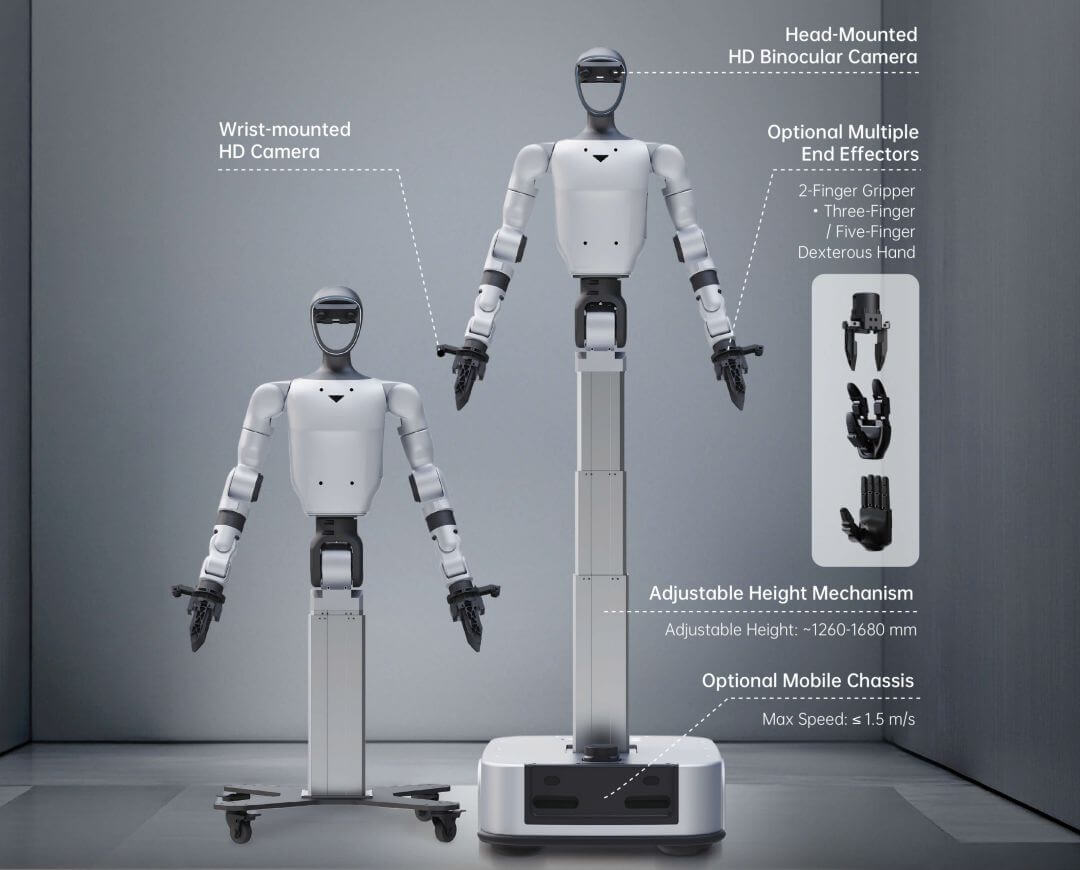

The end effector is intentionally not fixed. Four hardware options are available depending on the application: a 2-finger force-controlled gripper for general object handling, a 3-finger dexterous hand without tactile sensing, a 3-finger dexterous hand with tactile sensing for tasks requiring contact feedback, and a 5-finger dexterous hand for the most human-like manipulation requirements. The annotated diagram below shows both height configurations and the full range of compatible end effectors in context.

19 DoF Platform: The Kinematic Architecture Explained

The body's 19 total degrees of freedom (excluding end effectors) are distributed with deliberate precision. The arms account for 14 DoF (7 per arm), giving each limb the same kinematic redundancy found in professional collaborative robot arms. Two waist DoF — rotation about the Z-axis and pitch about the Y-axis — allow the torso to twist and bend independently, decoupling arm positioning from chassis heading. One column DoF handles vertical height adjustment. Two base DoF correspond to the chassis differential drive: forward/backward velocity and yaw angular velocity. When a 2-finger gripper is added to each arm, the total rises to 21 DoF.
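
The DoF tally above can be checked with a few lines of arithmetic. The sketch below uses only the per-subsystem counts quoted in this section, plus the figure of 1 DoF per 2-finger gripper from the specification table. The names are illustrative.

```python
# Per-subsystem DoF counts as quoted in the text (end effectors excluded).
BODY_DOF = {"arms": 7 * 2, "waist": 2, "column": 1, "base": 2}
TWO_FINGER_GRIPPER_DOF = 1  # per gripper, per the specification table


def total_dof(end_effector_dof: int = 0, arm_count: int = 2) -> int:
    """Body DoF plus the DoF contributed by one end effector per arm."""
    return sum(BODY_DOF.values()) + end_effector_dof * arm_count


print(total_dof())                        # body only
print(total_dof(TWO_FINGER_GRIPPER_DOF))  # with a 2-finger gripper on each arm
```

This prints 19 and 21, matching the quoted totals. DoF counts for the dexterous hands are not given here, so they are left out.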

The platform specification diagram below summarises the complete DoF breakdown per subsystem, confirming that the arm architecture alone — at 7 DoF per limb — matches the kinematic capability of a standalone industrial collaborative arm.

Low-Latency Control: VR Teleoperation and Precision Positioning

High-fidelity teleoperation is the primary mechanism for collecting demonstration data that trains autonomous policies. The G1-D's control pipeline delivers a system teleoperation latency of <100 ms with a 60 Hz sampling rate — fast enough for a human operator wearing a VR headset to maintain a convincing sense of embodiment during dexterous manipulation. Column positioning accuracy under VR teleoperation reaches ±0.5 mm; end-effector gripper accuracy is ±0.1 mm (accuracy varies with end-effector configuration). These tolerances matter for tasks like picking small components, inserting connectors, or folding flexible materials where imprecise reproduction would invalidate the training demonstration.
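
To put the latency and sampling figures side by side, the sketch below converts the 60 Hz rate into a sample period and counts how many periods fit inside the 100 ms latency bound. How the latency is measured is not stated here, so this is plain arithmetic on the quoted numbers, not a model of the control pipeline.

```python
SAMPLING_HZ = 60        # sampling rate from the specification
LATENCY_BOUND_MS = 100  # stated upper bound on system teleoperation latency

# Time between consecutive samples, in milliseconds.
sample_period_ms = 1000 / SAMPLING_HZ

# Whole sample periods that fit inside the latency bound (integer arithmetic
# avoids floating-point rounding at the boundary).
samples_within_bound = LATENCY_BOUND_MS * SAMPLING_HZ // 1000

print(f"Sample period: {sample_period_ms:.2f} ms")
print(f"Sample periods within the latency bound: {samples_within_bound}")
```

A new sample arrives roughly every 16.67 ms, so the latency bound corresponds to about six sample periods.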

The image below shows an operator performing VR-guided teleoperation alongside the G1-D, illustrating the system's key control response parameters that make high-quality data collection practical at scale.

Wheeled Chassis and Autonomous SLAM Navigation

The mobile base uses a differential drive with two independently driven wheels, supporting 360° in-place rotation and a maximum travel speed of 1.5 m/s. Onboard chassis sensors include a 3D LiDAR, two depth cameras, two physical collision sensors, and two low-obstacle detection sensors. This sensing suite enables the robot to build maps, localise itself, avoid dynamic obstacles, and autonomously return to its charging station. Navigation is managed through a SLAM service accessible via REST API, with support for points of interest, virtual walls, forbidden zones, and multi-point route sequencing. The robot's integrated 30 Ah battery powers the chassis for approximately 6 hours before docking is required. The image below shows the G1-D performing a folding task in a domestic bedroom, an illustrative scenario where the chassis navigates to the workspace and the column adjusts to match the surface height.
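
The in-place rotation follows from standard differential-drive kinematics, sketched below. The wheel track width is not published in this listing, so `TRACK_WIDTH_M` is a hypothetical placeholder. The function illustrates the textbook relation and is not part of the Unitree SDK.

```python
# HYPOTHETICAL value: the wheel track width is not given in the specification.
TRACK_WIDTH_M = 0.45


def wheel_speeds(v_m_s: float, omega_rad_s: float,
                 track_m: float = TRACK_WIDTH_M) -> tuple[float, float]:
    """Convert body velocity (forward v, yaw rate omega) to (left, right) wheel speeds.

    Positive omega is counter-clockwise, so the right wheel runs faster.
    """
    half_track = track_m / 2.0
    return v_m_s - omega_rad_s * half_track, v_m_s + omega_rad_s * half_track


# Straight-line travel at the quoted top speed: both wheels match.
print(wheel_speeds(1.5, 0.0))
# In-place rotation: zero forward speed, wheels equal and opposite.
print(wheel_speeds(0.0, 1.0))
```

With zero forward velocity the two wheels turn at equal and opposite speeds, which is what lets the chassis rotate on the spot.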

End-to-End AI Platform: From Data Acquisition to Deployed Policy

The G1-D is not simply a robot — it is the physical execution node of a complete embodied AI development stack. Unitree's platform integrates three interconnected layers: a streamlined data acquisition pipeline, a comprehensive model training and inference environment, and the UnifoLM-WMA-0 world-model–action architecture. The data pipeline standardises collection across multiple robot platforms using visual template management, one-click task generation, high-concurrency scheduling for hundreds of simultaneous robots, and 24/7 continuous collection — all feeding directly into mainstream training formats.

The image below shows the G1-D operating on an industrial assembly line alongside multiple identical units — a high-throughput data collection scenario enabled by the platform's concurrent architecture.

UnifoLM-WMA-0: World-Model–Action Architecture

The model training layer supports distributed training with up to 90% GPU utilisation, integration with open-source models including PI and GROOT, one-click model deployment, and a high-fidelity simulation environment for policy evaluation before physical rollout. At the core is UnifoLM-WMA-0 — Unitree's open-source world-model–action architecture spanning multiple robot embodiments. It operates in two modes: a decision-making mode that predicts future physical interactions to guide policy execution, and a simulation mode that generates high-fidelity synthetic training data from robot motion inputs. The full Sim2Real pipeline is documented and supported, covering architecture selection, training configuration, real-time monitoring, parameter editing, simulation testing, and model deployment.

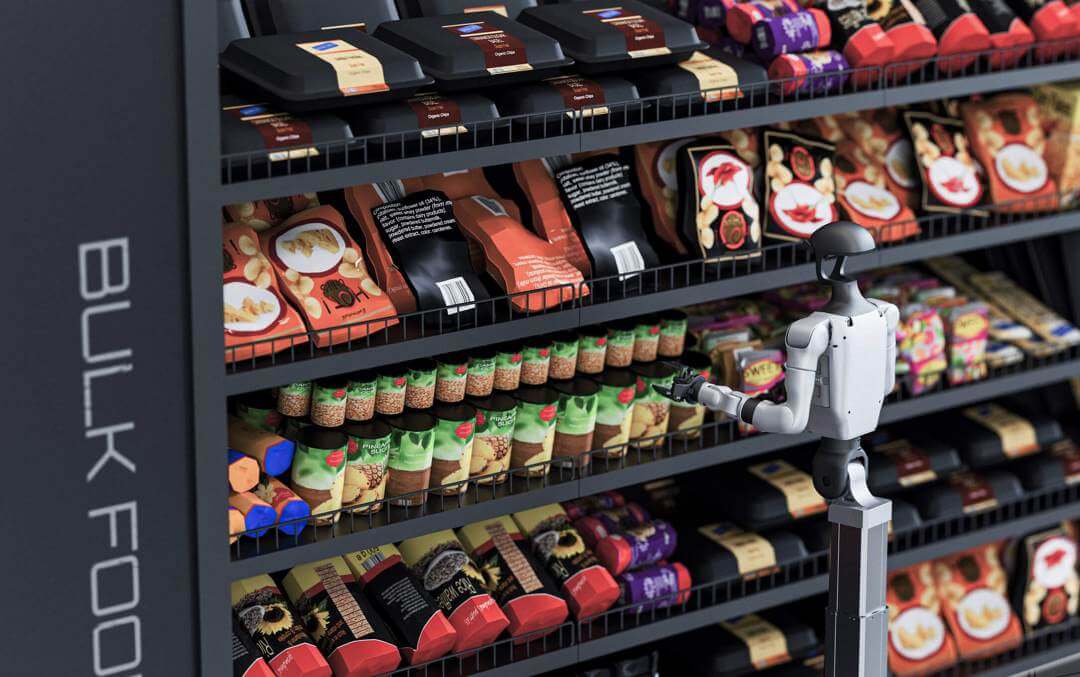

Service, Retail, and Industrial Inspection Use Cases

The G1-D's variable reach and SLAM autonomy make it practically deployable across three main verticals. In retail environments, the robot can navigate store aisles, identify shelf positions using onboard cameras, and restock products without human assistance. The image below shows the G1-D handling packaged goods on a bulk food shelf — a task that demands accurate object identification, controlled force application, and reliable spatial positioning.

In industrial and data-centre contexts, the G1-D's ability to navigate crowded aisles, extend its column to reach high rack positions, and apply controlled force through its arms makes it a viable tool for equipment inspection, cable management, and component handling. The image below shows the platform working in a server room, a space characterised by narrow aisles, vertically stacked hardware, and the need for precise arm movements that avoid unintended contact with equipment.

Technical Specifications of the Unitree G1-D Flagship

Mechanical Dimensions

| Model | G1-D Flagship |
|---|---|
| Overall Dimensions (Min. Column Height) | ~1260 × 525 × 570 mm |
| Overall Dimensions (Max. Column Height) | ~1680 × 525 × 570 mm |
| Total Weight (incl. battery) | ~80 kg |
| Cooling System | Local air cooling |

Degrees of Freedom

| Specification | Value |
|---|---|
| Total DoF (excl. End Effector) | 19 |
| Single Arm DoF (excl. End Effector) | 7 |
| Waist DoF | 2 |
| Column DoF | 1 |
| Base DoF | 2 |
| Total DoF with 2-Finger Gripper ×2 | 21 (19 + 1 per gripper × 2) |

Arm Performance

| Specification | Value |
|---|---|
| Max. Single Arm Payload | ~3 kg |
| Arm Reach (excl. End Effector) | ~0.45 m |
| End Effector Options | 2-Finger Gripper / 3-Finger Dexterous Hand (No Tactile) / 3-Finger Dexterous Hand (With Tactile) / 5-Finger Dexterous Hand |

Column & Waist Range of Motion

| Specification | Value |
|---|---|
| Column Lifting Travel | 450 mm |
| Column Lifting Speed | Max. 60 mm/s |
| Column Lifting Accuracy (general) | 1 mm |
| Lifting Accuracy (VR teleoperation) | ±0.5 mm |
| Max. Working Height | ~2 m |
| Waist Joint Range (Z-axis) | ±155° |
| Waist Joint Range (Y-axis) | -2.5° to +135° |

Chassis Performance

| Specification | Value |
|---|---|
| Chassis Dimensions (L × W × H) | 570 × 525 × 197 mm |
| Drive Type | Differential drive, supports 360° in-place rotation |
| Maximum Mobility Speed | 1.5 m/s |
| Chassis Sensors | LiDAR ×1 + Depth Camera ×2 + Physical Collision Sensor ×2 + Low-Obstacle Detection Sensor ×2 |

Computing & AI

| Specification | Value |
|---|---|
| Basic Computing Power | 8-core high-performance CPU |
| High-Performance Computing Module | NVIDIA Jetson Orin NX 16 GB (100 TOPS) |

Sensors & Perception

| Specification | Value |
|---|---|
| Head HD Binocular Camera | ×1; FOV: H 115°, V 80°, D 125°; Resolution: 3840 × 1200 |
| Wrist HD Camera | ×2; FOV: H 130°, V 60°, D 160°; Resolution: 1920 × 1080 |
| Base LiDAR | ×1 |
| Base Depth Camera | ×2 |
| Physical Collision Sensor (Base) | Present |
| Low-Obstacle Detection Sensor (Base) | Present |

Audio & Interaction

| Specification | Value |
|---|---|
| Microphone Array | 4-mic linear array, 20 mm spacing |
| Speaker | 8 Ω 3 W (5 W peak) |
| RGB Light Strip | 256 colors |
| ASR (Speech Recognition) | Local offline model |
| TTS (Text-to-Speech) | Local offline synthesis (Chinese & English) |
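
The microphone geometry bounds the frequency range over which a uniform linear array can resolve direction without spatial aliasing. The sketch below applies the standard half-wavelength spacing rule to the 20 mm figure. It assumes a speed of sound of 343 m/s (air at roughly 20 °C) and that the array is used for direction-sensitive processing, which this listing does not state.

```python
SPEED_OF_SOUND_M_S = 343.0  # ASSUMPTION: air at roughly 20 °C
MIC_COUNT = 4               # from the specification
MIC_SPACING_M = 0.020       # 20 mm spacing, from the specification


def spatial_aliasing_limit_hz(spacing_m: float, c_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Highest frequency satisfying spacing <= wavelength / 2 for a uniform linear array."""
    if spacing_m <= 0:
        raise ValueError("spacing must be positive")
    return c_m_s / (2.0 * spacing_m)


aperture_mm = (MIC_COUNT - 1) * MIC_SPACING_M * 1000.0
limit_hz = spatial_aliasing_limit_hz(MIC_SPACING_M)
print(f"Array aperture: {aperture_mm:.0f} mm")
print(f"Spatial aliasing limit: {limit_hz:.0f} Hz")
```

Under these assumptions the array spans 60 mm and stays free of spatial aliasing up to about 8.6 kHz, which covers the main speech band.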

Connectivity

| Specification | Value |
|---|---|
| WiFi | WiFi 6 |
| Bluetooth | Bluetooth 5.2 |

Control & Teleoperation

| Specification | Value |
|---|---|
| System Teleoperation Latency | <100 ms |
| Sampling Rate | 60 Hz |
| End-Effector Gripper Accuracy | ±0.1 mm (varies with end-effector configuration) |

Power & Battery — Upper Body

| Specification | Value |
|---|---|
| Upper Body Power Supply | Battery or direct cable connection |
| Upper Body Battery Capacity (quick-release) | 9000 mAh |
| Upper Body Battery Life | ~2 h |
| Upper Body Charger | 54 V / 5 A |

Power & Battery — Chassis

| Specification | Value |
|---|---|
| Chassis Power Supply | Battery / charging station |
| Chassis Battery Capacity (integrated) | 30 Ah |
| Chassis Battery Life | ~6 h |
| Chassis Charging Station | 51 V / 10 A |
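
The capacity, runtime, and charger figures for the two batteries allow a rough sanity check. The sketch below derives the average discharge current implied by the quoted runtimes, and an idealised charge time that assumes the charger delivers its full rated current for the whole charge. Real chargers taper near full charge and incur losses, so actual charge times will be longer. The function names are illustrative.

```python
def average_current_a(capacity_ah: float, runtime_h: float) -> float:
    """Average discharge current implied by a capacity and a quoted runtime."""
    return capacity_ah / runtime_h


def ideal_charge_time_h(capacity_ah: float, charger_current_a: float) -> float:
    """Lower-bound charge time, ASSUMING constant rated current and no losses."""
    return capacity_ah / charger_current_a


# (capacity in Ah, quoted runtime in h, charger current in A) from the tables above.
packs = {
    "chassis": (30.0, 6.0, 10.0),
    "upper body": (9.0, 2.0, 5.0),
}

for name, (capacity_ah, runtime_h, charger_a) in packs.items():
    draw = average_current_a(capacity_ah, runtime_h)
    charge = ideal_charge_time_h(capacity_ah, charger_a)
    print(f"{name}: ~{draw:.1f} A average draw, >= {charge:.1f} h to recharge")
```

The quoted runtimes imply an average draw of about 5 A for the chassis and 4.5 A for the upper body, with idealised recharge times of at least 3 h and 1.8 h respectively.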

Software & Development

| Specification | Value |
|---|---|
| SDK | unitree_sdk2 (based on G1 SDK) |
| SLAM Navigation | Yes (REST API; supports POIs, virtual walls, forbidden zones, multi-point routes) |
| AI World Model | UnifoLM-WMA-0 (open-source) |
| Supported Open-Source Models | PI, GROOT and community datasets |
| Distributed GPU Training Utilisation | Up to 90% |
| Video Streaming | ZMQ / WebRTC (head b |

Robot Specifications

Navigation & Sensors

LiDAR

Depth Camera: 2

Physical Collision Sensor: 2

Low-Obstacle Detection Sensor: 2

Robot Type: Humanoid

Application / Purpose: Service/Hotel/Restaurant/Café

Max Payload (kg): 3

Max Travel Speed (m/s): 1.5

Battery Life (h): 6

Computing Module: NVIDIA Jetson Orin NX 16 GB (100 TOPS)

SDK / Secondary Development: Yes

Weight and Dimensions

Gross Weight (kg): 80
